Why Your B2B Marketing Attribution is Wrong (And How Causal Inference Fixes It)

Your B2B marketing dashboard shows conversions, but it can’t tell you what actually caused them. Traditional attribution models—first-touch, last-touch, even multi-touch—assign credit based on correlation, not causation. They tell you which touchpoints appeared before a conversion, not which ones genuinely influenced the decision. For a business allocating six or seven figures across channels, this distinction determines whether you’re scaling what works or amplifying coincidence.

Causal inference attribution applies scientific methodology to isolate true marketing impact. Instead of assuming the last email or webinar “caused” a sale because it happened right before the deal closed, causal methods create control groups, account for confounding variables, and measure incremental lift. This approach answers the critical question every CFO asks: “What would have happened without this campaign?” The difference between correlation and causation becomes the difference between defending your budget and confidently requesting more.

B2B sales cycles complicate attribution further. With multiple stakeholders, 6-12 month decision windows, and dozens of touchpoints, traditional models either oversimplify or drown in complexity. Causal inference cuts through this noise by focusing on interventions—the moments when your marketing changed prospect behavior. This shift from tracking every interaction to measuring genuine influence transforms attribution from a reporting exercise into a strategic advantage. The methods are accessible, the implementation is practical, and the ROI clarity is immediate.

The Fatal Flaw in Traditional B2B Attribution Models

Why Correlation Doesn’t Mean Your Campaign Worked

Here’s the uncomfortable truth: just because two things happen at the same time doesn’t mean one caused the other. Yet most B2B attribution models operate on exactly this faulty logic.

Consider this common scenario: Your sales team closes a major deal, and your attribution platform gives credit to a branded search ad because it was the last click before conversion. The dashboard looks great, and you’re tempted to increase your branded search budget. But here’s what actually happened: The prospect attended your webinar three months ago, received a cold email from your sales team, saw your CEO speak at an industry conference, and then finally searched for your company name to find your website. The branded search didn’t drive the decision—it was simply the navigation method after the decision was already made.

This is the correlation versus causation problem that plagues traditional attribution. When you rely on intent data and touchpoint tracking alone, you’re seeing what happened, not why it happened. Your analytics show that prospects who click branded ads convert at high rates, so the model assumes those ads are effective. In reality, you’re observing an outcome that would have occurred regardless of the ad’s presence.

The financial impact of this misunderstanding is significant. You might allocate budget to channels that merely correlate with conversions while starving the channels that actually cause them. That webinar that started the customer journey? It gets little credit. The branded search ad that served as a digital doorknob? It looks like your star performer. This is why moving beyond simple correlation to establish true causal relationships is essential for accurate marketing measurement and smart budget decisions.

The Long Sales Cycle Problem

B2B sales cycles don’t happen overnight. While B2C purchases can occur in minutes, your typical B2B deal unfolds over 6 to 18 months, sometimes longer for enterprise contracts. This extended timeline wreaks havoc on traditional attribution models that were built for shorter conversion windows.

Consider what happens during this journey: A prospect attends your webinar in January, downloads a whitepaper in March, visits your pricing page in May, and finally converts in September. Which touchpoint deserves credit? Traditional models struggle to answer this accurately because they weren’t designed for such complexity.

The situation gets messier when you factor in multiple stakeholders. B2B purchases typically involve 6 to 10 decision-makers, each interacting with your brand independently. The CFO might read your blog posts, while the IT director attends demos and the CMO evaluates case studies. Traditional attribution treats these as separate journeys, missing the collaborative reality of B2B buying.

Add to this the noise from external factors like budget cycles, competitive pressures, and internal politics that influence timing but leave no digital footprint. Your attribution data captures touchpoints but misses the actual causal relationships driving decisions.

What Causal Inference Attribution Actually Measures

The Counterfactual Question

Traditional B2B marketing attribution tells you which touchpoints were present before a conversion, but it doesn’t answer the critical question: what would have happened if you hadn’t invested in that campaign at all? This is the counterfactual question, and it’s the foundation of causal inference in marketing.

Think of it this way: your company runs a LinkedIn advertising campaign targeting enterprise accounts. Three months later, several of those accounts close deals. Standard attribution gives LinkedIn credit because those companies clicked your ads. But here’s what you don’t know: would those companies have found you anyway through organic search? Were they already considering your solution because a colleague recommended you? Would they have converted without ever seeing that ad?

The counterfactual approach requires comparing what actually happened against what would have happened in an alternate scenario where the campaign didn’t exist. This is challenging in B2B because you can’t rewind time and replay the same quarter without the LinkedIn campaign. However, you can create comparable control groups or use statistical methods to estimate this alternate reality.

For example, if you target 1,000 accounts with your campaign, you might randomly hold out a comparable group of 200 accounts as a control. Because the two groups differ only in whether they saw the campaign, comparing their conversion rates starts to measure true causal impact rather than just correlation. This distinction matters immensely when you’re deciding whether to increase that LinkedIn budget by 50% next quarter.
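The comparison between campaign and control accounts can be sketched in a few lines of Python. The conversion counts below are made up for illustration, and the function names are hypothetical rather than part of any attribution tool.

```python
def conversion_rate(conversions: int, accounts: int) -> float:
    """Share of accounts that converted."""
    if accounts <= 0:
        raise ValueError("accounts must be positive")
    return conversions / accounts


def incremental_lift(treated_conv, treated_n, control_conv, control_n):
    """Compare a treated group against a holdout control group."""
    treated_rate = conversion_rate(treated_conv, treated_n)
    control_rate = conversion_rate(control_conv, control_n)
    absolute = treated_rate - control_rate
    # Relative lift is undefined when the control group has no conversions.
    relative = absolute / control_rate if control_rate > 0 else None
    return {
        "treated_rate": treated_rate,
        "control_rate": control_rate,
        "absolute_lift": absolute,
        "relative_lift": relative,
    }


# Hypothetical numbers: 1,000 targeted accounts, 200 held out as control.
result = incremental_lift(treated_conv=60, treated_n=1000,
                          control_conv=8, control_n=200)
print(result)
```

With these assumed inputs, the treated group converts at 6% and the control at 4%, so only the gap between them can be credited to the campaign.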

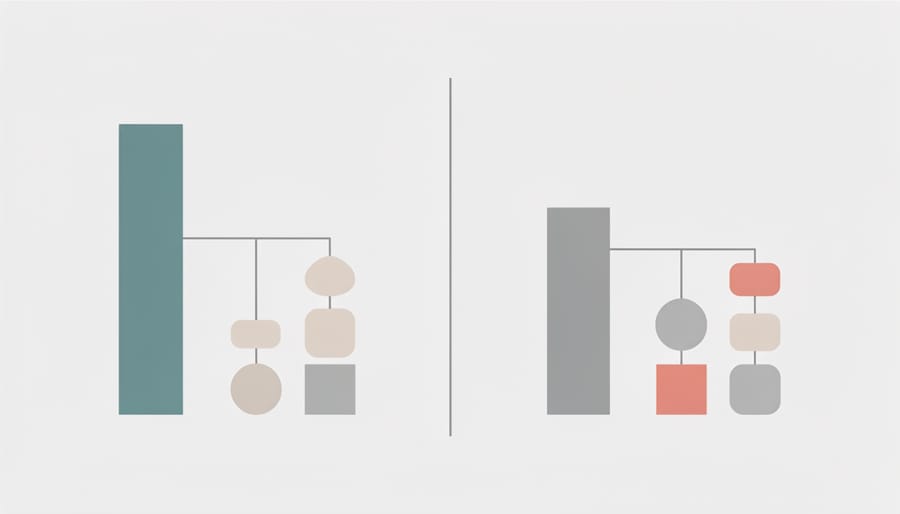

How It’s Different From What You’re Using Now

Most B2B marketers currently rely on attribution models that tell an incomplete story. Here’s what actually separates traditional approaches from causal inference methods:

Traditional last-touch attribution credits the final interaction before conversion. If someone downloads a whitepaper and then requests a demo, the demo request gets all the credit. Multi-touch models distribute credit across touchpoints using predetermined rules, but these rules are arbitrary. A common position-based rule, for example, gives the first touch 40%, splits 20% across the middle touches, and gives the last touch 40%. Why these percentages? Because someone decided they made sense.
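To see how mechanical a rule-based model is, here is a minimal sketch of the 40/20/40 position-based split. The function name and the handling of one- and two-touch journeys are assumptions made for illustration; real platforms may differ.

```python
def position_based_credit(touchpoints, first=0.4, last=0.4):
    """Split credit by position only: fixed shares for the first and last
    touch, with the remainder divided evenly across the middle touches."""
    n = len(touchpoints)
    if n == 0:
        return []
    if n == 1:
        return [(touchpoints[0], 1.0)]
    if n == 2:
        # No middle touches: rescale the first and last shares to sum to 1.
        total = first + last
        return [(touchpoints[0], first / total), (touchpoints[1], last / total)]
    middle_each = (1.0 - first - last) / (n - 2)
    credits = [first] + [middle_each] * (n - 2) + [last]
    return list(zip(touchpoints, credits))


journey = ["webinar", "whitepaper", "pricing page", "demo request"]
for touch, credit in position_based_credit(journey):
    print(f"{touch}: {credit:.0%}")
```

The output depends only on where a touchpoint sits in the sequence. Nothing in the calculation asks whether removing that touchpoint would have changed the outcome.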

Causal inference attribution works fundamentally differently. Instead of assuming which touchpoints mattered, it uses statistical methods to determine what would have happened without each touchpoint. This approach compares actual outcomes against control groups or counterfactual scenarios, similar to how predictive analytics forecasts future performance based on historical patterns.

The practical difference shows up in your decisions. Traditional models might tell you that webinars generate 30% of your pipeline. Causal inference reveals whether webinars actually cause conversions or if attendees were already likely to convert anyway. This distinction matters when allocating budget.

Consider email campaigns. Traditional attribution counts every email opened before a conversion as contributing value. Causal methods test whether sending that email actually changed the outcome. You discover that your weekly newsletter doesn’t drive conversions among engaged accounts, but your case study emails do.

The decision-making impact is clear. Traditional models optimize for correlation, leading you to invest in channels that look good but don’t move the needle. Causal inference identifies true drivers, letting you cut wasteful spending and double down on tactics that genuinely influence buyer behavior. You stop guessing and start knowing which marketing activities deserve your budget.

Practical Methods for Causal Attribution in B2B

Geo-Based Testing

Geo-based testing offers a practical way to measure campaign impact by comparing similar markets with different marketing exposures. Split your target markets into test and control regions, run campaigns in only the test areas, then measure the difference in results. This approach works particularly well for B2B companies with geographically distributed prospects.

For trade shows, select similar cities or regions based on market size, industry concentration, and historical performance. Attend events in your test markets while skipping comparable shows in control markets. Track lead generation, pipeline velocity, and closed deals across both groups over six to twelve months. The difference reveals your true trade show ROI, accounting for deals that would have happened anyway.

Regional campaigns follow the same logic. Launch your new demand generation program in half your territories while maintaining standard activities in the others. This works especially well for account-based marketing, where you can split target account lists by geography and measure engagement differences.

The key is ensuring your test and control markets are genuinely comparable. Look at historical conversion rates, average deal sizes, sales cycle lengths, and competitive intensity. Automated reporting tools make tracking these metrics across regions straightforward, eliminating manual spreadsheet work.

Run tests for at least one full sales cycle to capture meaningful data. B2B buying journeys take months, so patience pays off with reliable attribution insights that justify your marketing investments.

Holdout Groups and Control Testing

The most reliable way to measure true marketing impact is through holdout testing—randomly withholding your marketing efforts from a segment of your audience and comparing results against those who received them. This approach answers a fundamental question: what would have happened without your marketing investment?

Start with email campaigns by randomly splitting your contact list into treatment and control groups. The treatment group receives your campaign while the control group doesn’t. Track conversion rates, deal velocity, and revenue across both segments over a defined period. The difference represents your true lift, not just correlation. For accuracy, ensure your control group represents at least 10-15% of your total audience and maintain consistency across testing periods.

Apply the same methodology to account-based marketing. Randomly select 15-20% of target accounts as your control group, excluding them from display ads, retargeting, and direct outreach. Monitor organic conversion patterns against accounts receiving full marketing treatment. This reveals whether your ABM strategy genuinely accelerates deals or simply claims credit for inevitable conversions.

Automation becomes essential when scaling holdout testing across multiple campaigns. Use your marketing automation platform to create dynamic segmentation rules that automatically assign contacts to control groups based on predetermined percentages. Set up scheduled reports comparing performance metrics between groups, and establish alerts when lift falls below expected thresholds.
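One way to automate assignment outside any particular platform is to hash each account ID, so the same account always lands in the same group for a given experiment. This is a sketch under assumptions: the account IDs, the experiment name, and the 15% holdout share are illustrative, and your marketing automation platform may offer its own random-split feature instead.

```python
import hashlib


def assign_group(account_id: str, experiment: str, holdout_share: float = 0.15) -> str:
    """Deterministically assign an account to 'control' or 'treatment'.

    Hashing the experiment name together with the account ID keeps the
    assignment stable across runs while giving each experiment its own split.
    """
    if not 0 < holdout_share < 1:
        raise ValueError("holdout_share must be between 0 and 1")
    digest = hashlib.sha256(f"{experiment}:{account_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "control" if bucket < holdout_share else "treatment"


# Hypothetical account IDs and experiment name.
accounts = [f"account-{i}" for i in range(10_000)]
groups = [assign_group(a, "abm-q3-holdout") for a in accounts]
print(groups.count("control") / len(groups))
```

Because assignment depends only on the ID and the experiment name, a contact re-imported next month stays in the same group, which helps keep the control group clean over a long sales cycle.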

The key consideration: communicate holdout testing plans with sales teams beforehand. They need to understand why certain accounts aren’t receiving marketing support, preventing confusion and maintaining alignment between departments.

Incrementality Testing for Paid Channels

The most reliable way to determine if your paid channels actually work is to run controlled experiments. Think of incrementality testing as a scientific proof: you create two similar groups and expose only one to your ads, then measure the difference in conversions.

Start with geographic holdout tests for channels like LinkedIn or Google Ads. Split your target markets into test and control regions of similar size and characteristics. Run your campaigns in test regions only for 4-6 weeks, then compare conversion rates between the two groups. The difference represents your true incremental lift. For example, if test regions convert at 3% and control regions at 2.5%, your ads drive an incremental improvement of 0.5 percentage points, not the full 3% your dashboard shows.
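A gap between test and control regions is only useful if it is larger than random noise. The sketch below applies a standard two-proportion z-test to the 3% versus 2.5% example. The region sizes of 2,000 accounts each are an assumption added for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_test, n_test, conv_control, n_control):
    """Return (lift, z, two-sided p-value) for test vs. control conversion rates."""
    if n_test <= 0 or n_control <= 0:
        raise ValueError("group sizes must be positive")
    p_test = conv_test / n_test
    p_control = conv_control / n_control
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_test + conv_control) / (n_test + n_control)
    se = sqrt(pooled * (1 - pooled) * (1 / n_test + 1 / n_control))
    if se == 0:
        raise ValueError("cannot test when no variation is observed")
    z = (p_test - p_control) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_test - p_control, z, p_value


# Assumed sizes: 2,000 accounts per region; 3% vs. 2.5% conversion.
lift, z, p_value = two_proportion_z_test(60, 2000, 50, 2000)
print(f"lift={lift:.3%} z={z:.2f} p={p_value:.2f}")
```

At these assumed sample sizes, the 0.5-point gap is not statistically distinguishable from zero, which is a reminder that small B2B audiences often need longer tests or larger regions before a lift can be trusted.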

For campaigns you can’t split geographically, use time-based holdouts. Run campaigns for two weeks, pause for two weeks, and repeat this pattern over several months. Compare performance during on and off periods, accounting for seasonal variations. This approach works particularly well for retargeting campaigns where you suspect significant overlap with organic conversions.
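The on/off comparison can be sketched as follows. The period data is invented, and this simple version only averages daily conversion rates. It does not adjust for seasonality beyond what the alternating schedule already balances out.

```python
from statistics import mean


def on_off_lift(periods):
    """Average daily conversions while the campaign is on minus while it is off.

    `periods` is a list of (campaign_on, conversions, days) tuples.
    """
    on_rates = [conv / days for is_on, conv, days in periods if is_on]
    off_rates = [conv / days for is_on, conv, days in periods if not is_on]
    if not on_rates or not off_rates:
        raise ValueError("need at least one on period and one off period")
    return mean(on_rates) - mean(off_rates)


# Hypothetical two-week periods alternating on and off.
periods = [
    (True, 28, 14),
    (False, 21, 14),
    (True, 35, 14),
    (False, 28, 14),
]
print(on_off_lift(periods))  # extra conversions per day while ads are running
```

Alternating several on and off periods matters more than the arithmetic: a single on/off pair cannot separate the campaign's effect from whatever else changed between those weeks.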

The key metric is incremental cost per acquisition: divide your total ad spend by only the incremental conversions, not all conversions attributed to the channel. This reveals your true ROI and often shows that channels receiving last-click credit are less efficient than they appear.
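The calculation is simple enough to sketch directly. The spend figure and test-group size below are assumptions for illustration. The conversion rates reuse the 3% versus 2.5% example.

```python
def incremental_cpa(ad_spend, test_conversions, test_size, control_rate):
    """Ad spend divided by conversions the ads actually added.

    The expected baseline is what the test group would have produced at the
    control group's conversion rate. Returns None when no positive lift exists.
    """
    expected_baseline = control_rate * test_size
    incremental = test_conversions - expected_baseline
    if incremental <= 0:
        return None
    return ad_spend / incremental


# Hypothetical: $15,000 spend, 1,000 test accounts, 30 conversions (3%),
# control regions converting at 2.5%.
spend, conversions = 15_000, 30
dashboard_cpa = spend / conversions
true_cpa = incremental_cpa(spend, conversions, test_size=1000, control_rate=0.025)
print(f"dashboard CPA: ${dashboard_cpa:,.0f}, incremental CPA: ${true_cpa:,.0f}")
```

Under these assumptions only 5 of the 30 conversions are incremental, so the cost per incremental acquisition is six times the figure a last-click dashboard would report.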

Document your testing methodology and share results with stakeholders regularly. This transparency builds trust in your attribution approach and justifies budget decisions with actual evidence rather than correlation-based attribution models.

Implementing Causal Attribution Without Overcomplicating Your Stack

Start With One High-Investment Channel

Start with your highest-investment channel to see the clearest impact from better attribution. This is typically paid search, LinkedIn ads, or content marketing if you’re running a substantial operation there.

Choose one channel where you’re spending at least $2,000 monthly or investing significant staff time. The larger the investment, the more valuable your attribution insights will be.

Here’s your simple three-step test setup:

First, identify your baseline. Pull the last 90 days of conversion data from this channel. Note which campaigns or content pieces your current system credits with conversions. Export this into a spreadsheet with dates, touchpoints, and deal values.

Second, layer in your causal inference approach. For the next 30 days, track additional context: How many times did prospects engage before converting? What other channels did they interact with in the same week? Were there any sales outreach efforts happening simultaneously?

Third, compare results. Look for patterns your original attribution missed. You’ll often find that your high-investment channel works in combination with other efforts rather than driving conversions alone.

This focused approach prevents overwhelm and generates quick wins. You’re not rebuilding your entire attribution system at once. Instead, you’re testing causal thinking on real budget decisions. Once you see results from one channel, you can expand the methodology across your marketing mix. The key is starting where the financial stakes justify the effort to get attribution right.

Automate Data Collection and Reporting

Manual data collection creates bottlenecks that compromise attribution accuracy and consume valuable time. The solution lies in implementing systems that capture and analyze marketing data automatically, enabling real-time insights while freeing your team for strategic work.

Modern automated tracking systems connect your marketing touchpoints directly to your CRM, eliminating data gaps that skew attribution results. These tools capture every interaction across email campaigns, website visits, content downloads, and sales conversations without requiring manual entry. This continuous data flow provides the foundation for accurate causal inference analysis.

Start by integrating your marketing automation platform with your CRM and analytics tools. Configure tracking parameters that follow prospects through their entire journey, from first touch to closed deal. AI-powered automation can then analyze patterns across thousands of customer journeys, identifying which combinations of touchpoints genuinely drive conversions versus those that simply correlate with sales.

Set up automated reporting dashboards that update in real-time, showing attribution metrics across different models simultaneously. This allows you to compare traditional last-touch data against causal inference results, revealing which channels deserve increased investment.

The key is reducing friction in data collection while maintaining data quality. Automated processes eliminate human error and ensure consistent tracking standards across all campaigns. This reliability transforms your attribution analysis from guesswork into actionable intelligence, supporting confident budget decisions based on verifiable cause-and-effect relationships.

What to Measure and When to Act

Track these core metrics to gauge attribution accuracy: cost per opportunity, customer acquisition cost by channel, and revenue influenced per touchpoint. For B2B companies with 90+ day sales cycles, run attribution tests for two to three full cycles (typically six to nine months) before making major budget decisions. This duration ensures you capture seasonal variations and account for deal velocity differences.

Set clear decision thresholds before testing begins. If a channel shows 30% higher cost per closed deal than your target after the minimum test period, consider reallocation. Conversely, channels generating qualified opportunities at 20% below target cost warrant immediate scaling consideration. Monitor leading indicators weekly (form fills, demo requests, sales-qualified leads), but evaluate attribution model performance monthly. When implementing changes, adjust budgets incrementally in 15-20% shifts rather than dramatic cuts. This measured approach limits the damage if a result later proves to be noise, and it keeps your ongoing tests comparable from one period to the next.
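Writing the thresholds down as code before the test starts makes them harder to bend afterward. The sketch below is one possible encoding. It simplifies by applying both thresholds to a single cost metric, and the function names and the 15% default step are illustrative choices within the ranges given above.

```python
def budget_action(actual_cost, target_cost, cut_threshold=0.30, scale_threshold=0.20):
    """Classify a channel by how far its cost sits from the target."""
    if target_cost <= 0:
        raise ValueError("target_cost must be positive")
    deviation = (actual_cost - target_cost) / target_cost
    if deviation >= cut_threshold:
        return "consider reallocation"
    if deviation <= -scale_threshold:
        return "consider scaling"
    return "hold"


def next_budget(current_budget, action, step=0.15):
    """Move the budget by one incremental step rather than a dramatic cut."""
    if action == "consider reallocation":
        return current_budget * (1 - step)
    if action == "consider scaling":
        return current_budget * (1 + step)
    return current_budget


action = budget_action(actual_cost=14_000, target_cost=10_000)
print(action, next_budget(20_000, action))
```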

Common Mistakes That Invalidate Your Causal Tests

Testing Too Short for Your Sales Cycle

One of the most common pitfalls in B2B attribution testing is running experiments for too short a period. Unlike B2C transactions that often convert within days or hours, B2B sales cycles typically span weeks or months. Testing for just two or three weeks might capture initial touchpoints, but you’ll miss the complete conversion journey and draw misleading conclusions about what actually drives results.

To determine the right test duration, start with your average sales cycle length and add at least 50% more time. If your typical customer takes 60 days from first touch to close, run tests for a minimum of 90 days. This buffer accounts for variations in buyer behavior and ensures you capture outliers who convert faster or slower than average.

Track your data throughout the testing period and look for stabilization in your metrics. When week-over-week changes drop below 10%, you’re likely seeing reliable patterns. For businesses with longer sales cycles exceeding six months, consider using proxy metrics like MQL-to-SQL conversion rates or demo requests to validate your attribution model before waiting for final revenue data. This approach lets you make informed decisions faster while maintaining statistical validity.
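Both rules of thumb, the 50% duration buffer and the 10% stabilization check, can be sketched as small helpers. Requiring three consecutive stable weeks is an assumption added here. The text above only specifies the 10% threshold.

```python
import math


def minimum_test_days(avg_cycle_days, buffer=0.5):
    """Average sales cycle plus a buffer (50% by default), rounded up."""
    return math.ceil(avg_cycle_days * (1 + buffer))


def has_stabilized(weekly_values, threshold=0.10, weeks=3):
    """True when the last `weeks` week-over-week changes are all below threshold."""
    if len(weekly_values) < weeks + 1:
        return False
    recent = weekly_values[-(weeks + 1):]
    for previous, current in zip(recent, recent[1:]):
        if previous == 0:
            return False  # relative change is undefined
        if abs(current - previous) / abs(previous) >= threshold:
            return False
    return True


print(minimum_test_days(60))  # a 60-day cycle calls for a 90-day test

# Hypothetical weekly conversion rates for the test group.
weekly_rates = [0.010, 0.016, 0.020, 0.021, 0.0215, 0.022]
print(has_stabilized(weekly_rates))
```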

Contamination Between Test and Control Groups

Even with careful test design, contamination between your test and control groups can undermine your B2B attribution results. This happens when control group members accidentally receive treatment, or when external factors blur the distinction between groups.

The most common culprits are shared IP addresses at large companies, where one account in your test group triggers retargeting ads that control group contacts see on shared devices. Cross-device tracking creates similar problems when decision-makers access content on both work and personal devices. Your sales team can also inadvertently contaminate tests by mentioning campaigns or content to control group accounts during regular conversations.

Simple prevention strategies make a significant difference. Start by segmenting at the account level rather than individual contacts, especially for enterprise clients sharing network infrastructure. Implement suppression lists in your advertising platforms to exclude control group domains entirely. Brief your sales team before launching tests so they understand which accounts shouldn’t receive specific messaging. Document any contamination you discover during the test period and factor it into your analysis.
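Account-level suppression can be approximated by filtering on email domain before a send. This is a minimal sketch with made-up addresses and hypothetical function names. It assumes one domain per account, which will not hold for companies that use several domains.

```python
def email_domain(email: str) -> str:
    """Return the lower-cased domain part of an email address."""
    return email.rsplit("@", 1)[-1].strip().lower()


def split_send_list(contacts, control_domains):
    """Separate contacts into those to send to and those to suppress.

    Every contact whose domain belongs to a control account is suppressed,
    so no one at a control company receives the campaign.
    """
    control = {d.strip().lower() for d in control_domains}
    send, suppressed = [], []
    for email in contacts:
        target = suppressed if email_domain(email) in control else send
        target.append(email)
    return send, suppressed


# Hypothetical contacts; acme.example is a control account.
contacts = ["cfo@acme.example", "it.director@ACME.example", "cmo@globex.example"]
send, suppressed = split_send_list(contacts, {"acme.example"})
print(send, suppressed)
```

The same list of control domains can then be uploaded as an exclusion audience in your advertising platforms so that email and paid channels suppress the same accounts.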

Most importantly, automate these safeguards whenever possible. Manual processes lead to human error, but automated suppression rules and account-level segmentation maintain test integrity without requiring constant oversight. This protection ensures your attribution insights reflect genuine causal relationships rather than contaminated data.

The gap between what gets credit and what actually drives results costs B2B marketers millions in misallocated budget every year. Traditional attribution models assign credit based on proximity and correlation, while causal inference reveals the genuine cause-and-effect relationships that power your revenue engine. This distinction matters because it’s the difference between rewarding channels that happen to be present and investing in channels that make things happen.

Causal inference attribution doesn’t require a complete overhaul of your marketing operations. The methodology integrates with your existing systems and enhances your current reporting frameworks. By implementing controlled experiments and proper statistical analysis, you gain clarity on which investments truly move the needle for your business.

Start small and build momentum. Choose one campaign running across multiple channels and test it using causal methods. Set up a simple holdout group or implement geographic testing to measure incremental impact. Compare these results against your traditional attribution reports and observe the differences. You’ll likely discover that some channels deserve more credit than they’re receiving, while others have been coasting on proximity alone.

The transition to causal inference attribution empowers you to defend your marketing budget with confidence, automate smarter allocation decisions, and communicate ROI to stakeholders with scientific backing. Ready to implement causal inference in your attribution strategy? Review your current campaign data, identify high-investment channels worth testing, and begin with one controlled experiment this quarter.
